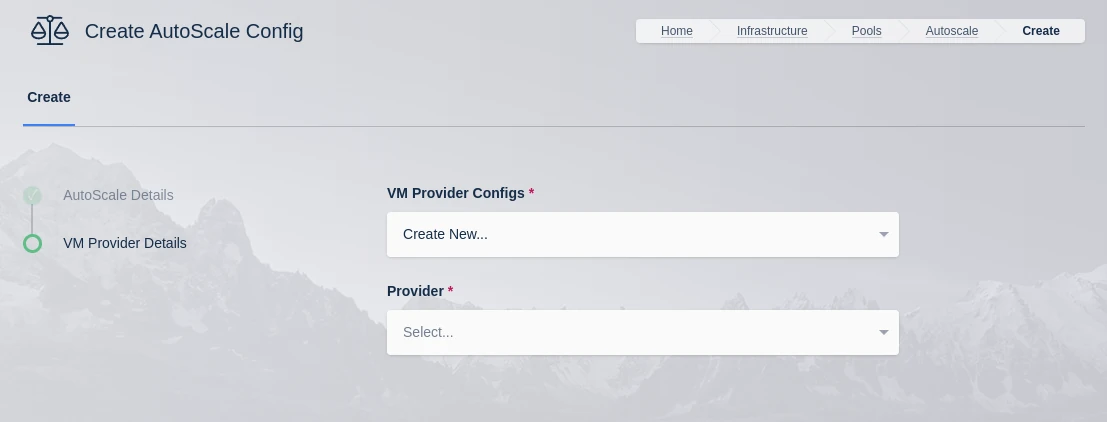

VM Provider Configs

Note

AutoScaling is available in the Community and Enterprise editions only. For more information on licensing please visit: Licensing.

Create New Provider

| Name | Description |
|---|---|
| VM Provider Configs | Select an existing config or create a new config. If you select an existing config and change any of its details, those changes apply to everything that uses the same VM Provider config. |
| Provider | Select a provider from AWS, Azure, Digital Ocean, Google Cloud or Oracle Cloud. If you select an existing provider config, the provider is selected automatically. |

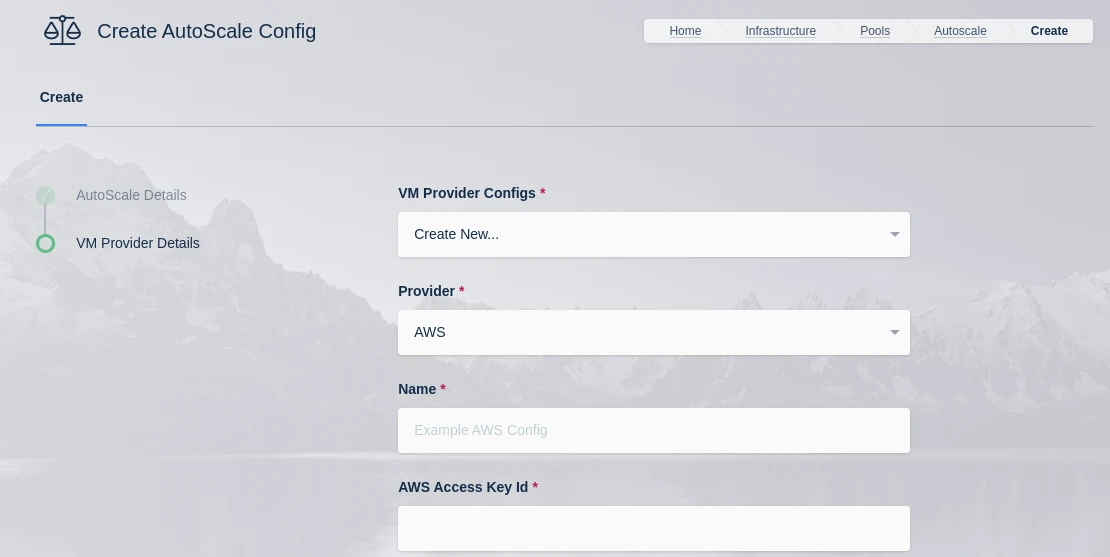

AWS Settings

A number of settings are required to be defined to use this functionality.

AWS Settings

| Name | Description |
|---|---|
| Name | A name to use to identify the config. |
| AWS Access Key ID | The AWS Access Key used for the AWS API. If the value {IAM} is defined, the service will query the local metadata instance on the Webapp host to utilize an instance-attached IAM Role. |
| AWS Secret Access Key | The AWS Secret Access Key used for the AWS API. This value is ignored if {IAM} is defined for AWS Access Key ID. |
| AWS: Region | The AWS Region the EC2 nodes should be provisioned in (e.g. us-east-1). |
| AWS: EC2 AMI ID | The AMI ID to use for the provisioned EC2 nodes. This should be an OS that is supported by the Kasm installer. |
| AWS: EC2 Instance Type | The EC2 Instance Type (e.g. t3.micro). Note that the Cores and Memory override settings don’t necessarily have to match the instance configuration. This is to allow for over-provisioning. |
| AWS: Max EC2 Nodes | The maximum number of EC2 nodes to provision, regardless of the need for available free slots. |
| AWS: EC2 Security Group IDs | A JSON list containing the security group IDs to assign to the EC2 nodes. |
| AWS: EC2 Subnet ID | The subnet ID to place the EC2 nodes in. |
| AWS: EC2 EBS Volume Size (GB) | The size of the root EBS Volume for the EC2 nodes. |
| AWS: EC2 EBS Volume Type | The EBS Volume Type (e.g. gp2). |
| AWS: EC2 IAM | The IAM to assign to the EC2 nodes. Administrators may want to assign CloudWatch IAM access. |
| AWS: EC2 Custom Tags | A JSON dictionary of custom tags to assign to auto-scaled Agent EC2 nodes. |
| AWS: EC2 Startup Script | When the EC2 nodes are provisioned, this script is executed. The script is responsible for installing and configuring the Kasm Agent. |
| Retrieve Windows VM Password from AWS | When provisioning an AWS Windows VM, Kasm can retrieve the password generated by AWS and store it in the Server configuration record created during the autoscale provision. This only happens if the Connection Password field from the attached Autoscale config is blank. When that field is populated, Kasm uses the defined value instead of what is returned from AWS. The Administrator may want to leave this field blank and disable retrieving the password from AWS if they wish the Kasm user to be presented with a login screen to manually enter credentials upon connecting to the Windows Workspace. NOTE: This setting only affects Windows (RDP connection type) AWS instances. |
| SSH Keys | The SSH Key pair to assign to the EC2 node. |
| AWS Config Override (JSON) | Custom configuration may be added to the provision request for advanced use cases. Instance configuration is overridden in the ‘instance_config’ configuration block. |
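The JSON-typed AWS fields (Security Group IDs and Custom Tags) can be drafted and checked before they are pasted into the form. The following is a minimal Python sketch. The security group IDs, the tag names, and the helper `check_aws_json_fields` are hypothetical placeholders for illustration, not values or functions provided by Kasm.

```python
import json


def check_aws_json_fields(security_groups_json, custom_tags_json):
    """Parse the two JSON fields and confirm their top-level types."""
    groups = json.loads(security_groups_json)
    tags = json.loads(custom_tags_json)
    # Security Group IDs are described as a JSON list of IDs.
    if not isinstance(groups, list) or not all(isinstance(g, str) for g in groups):
        raise ValueError("Security Group IDs must be a JSON list of strings")
    # Custom Tags are described as a JSON dictionary.
    if not isinstance(tags, dict):
        raise ValueError("Custom Tags must be a JSON dictionary")
    return groups, tags


# Placeholder values for illustration only.
security_groups = json.dumps(["sg-0123456789abcdef0", "sg-0fedcba9876543210"])
custom_tags = json.dumps({"team": "workspaces", "environment": "test"})

groups, tags = check_aws_json_fields(security_groups, custom_tags)
print(security_groups)
print(custom_tags)
```

The printed strings are what would be pasted into the respective fields. A value of the wrong shape, such as a dictionary in the Security Group IDs field, raises a `ValueError` instead.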

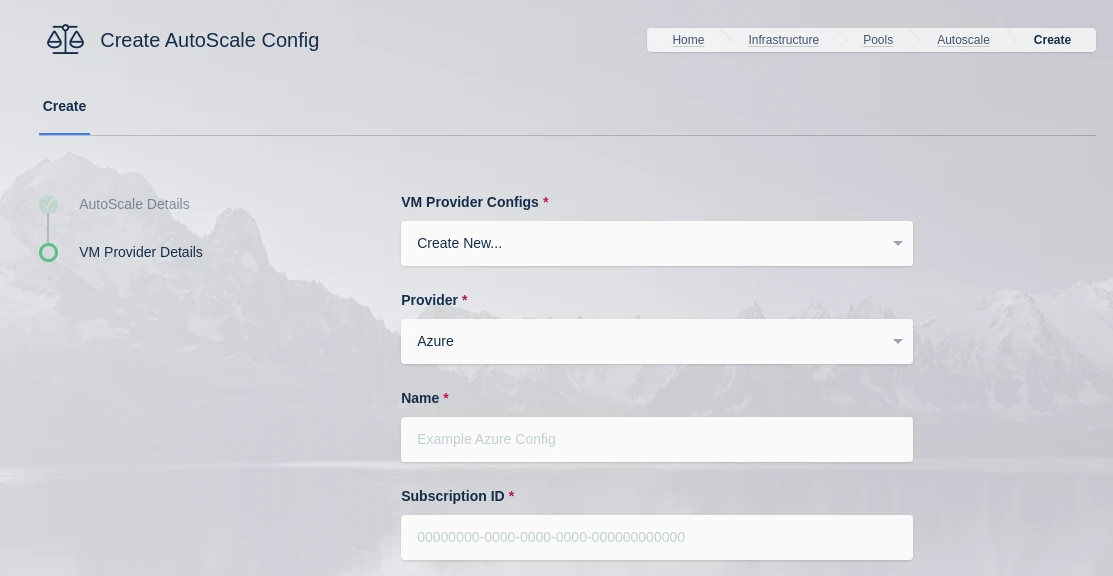

Azure Settings

A number of settings are required to be defined to use this functionality. The Azure settings appear in the Deployment Zone configuration when the feature is licensed.

Azure Settings

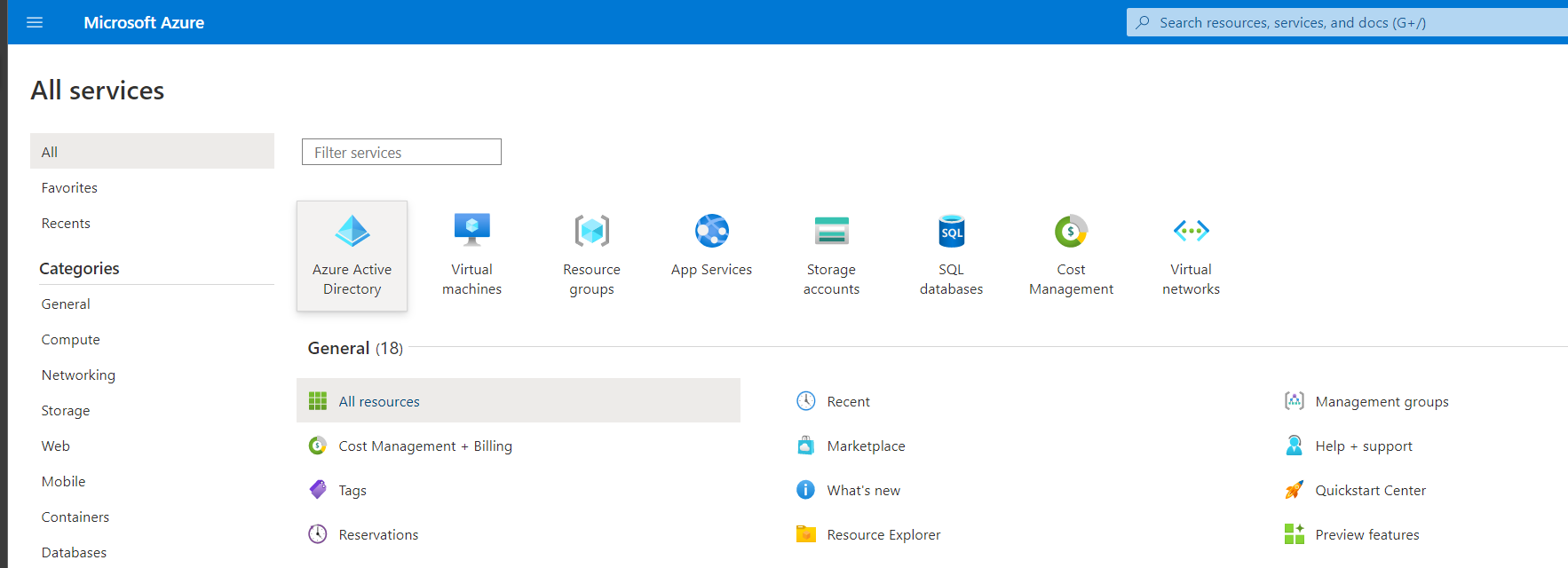

Register Azure app

An API key must be created for Kasm to interface with Azure. Azure calls these apps, and this example walks through registering one along with the required permissions.

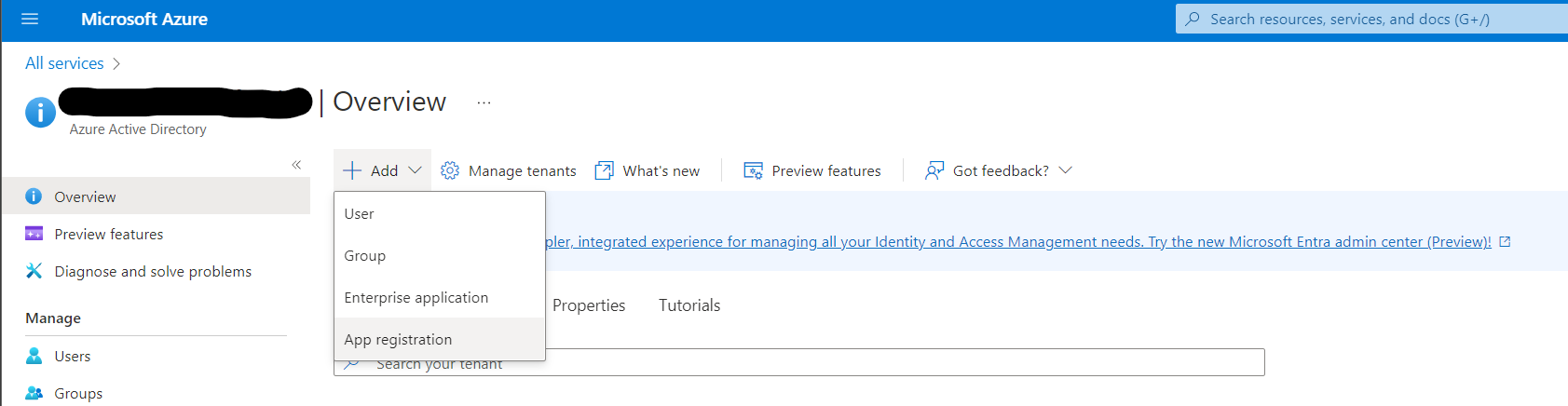

Register an app by going to the Azure Active Directory service in the Azure portal.

Azure Active Directory

From the Add dropdown select App Registration

App Registration

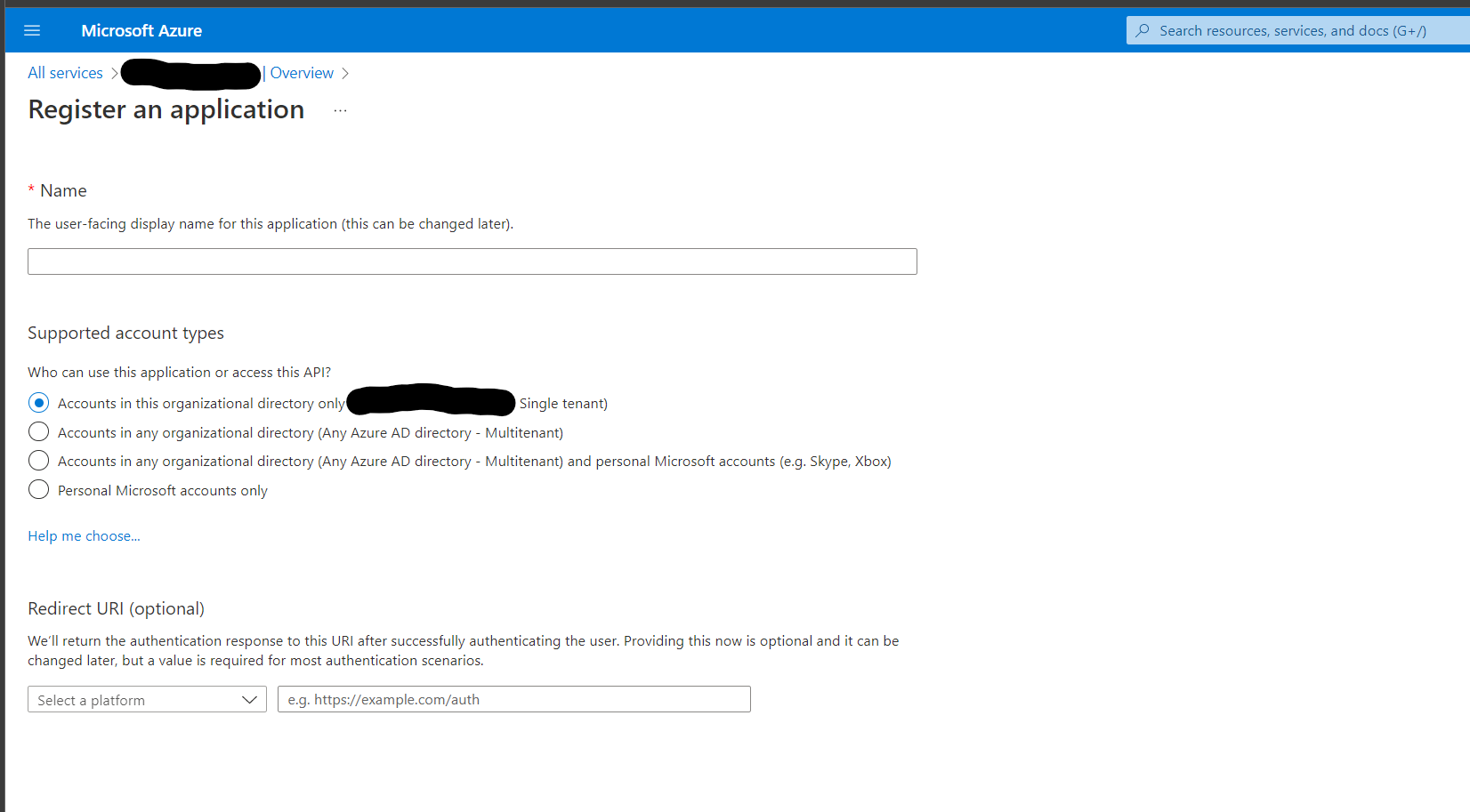

Give this app a human-readable name such as Kasm Workspaces

App Registration

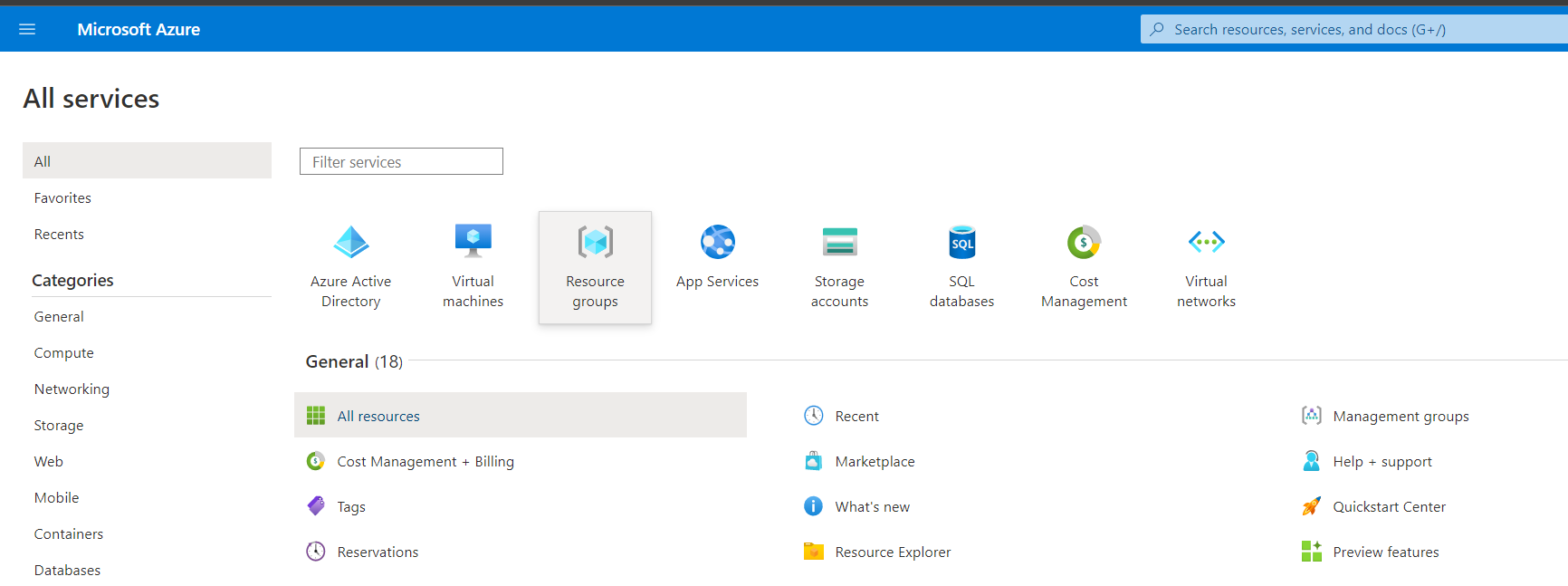

Go to Resource Groups and select the Resource Group that Kasm will autoscale in.

Azure Resource Groups

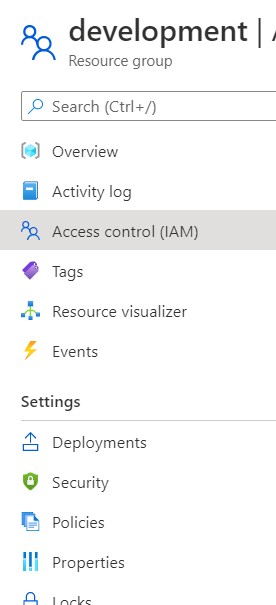

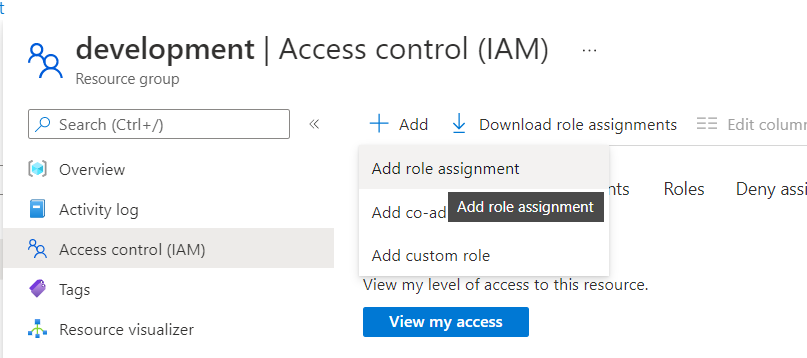

Select Access Control (IAM)

Access Control

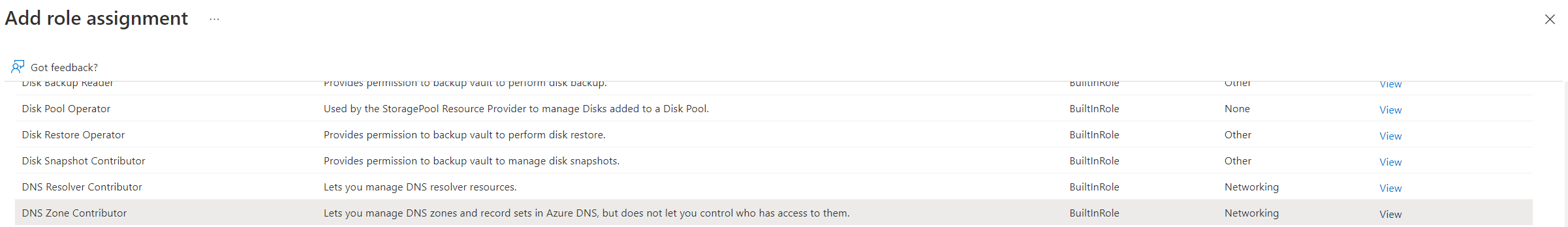

From the Add drop down select Add role assignment

Add Role Assignment

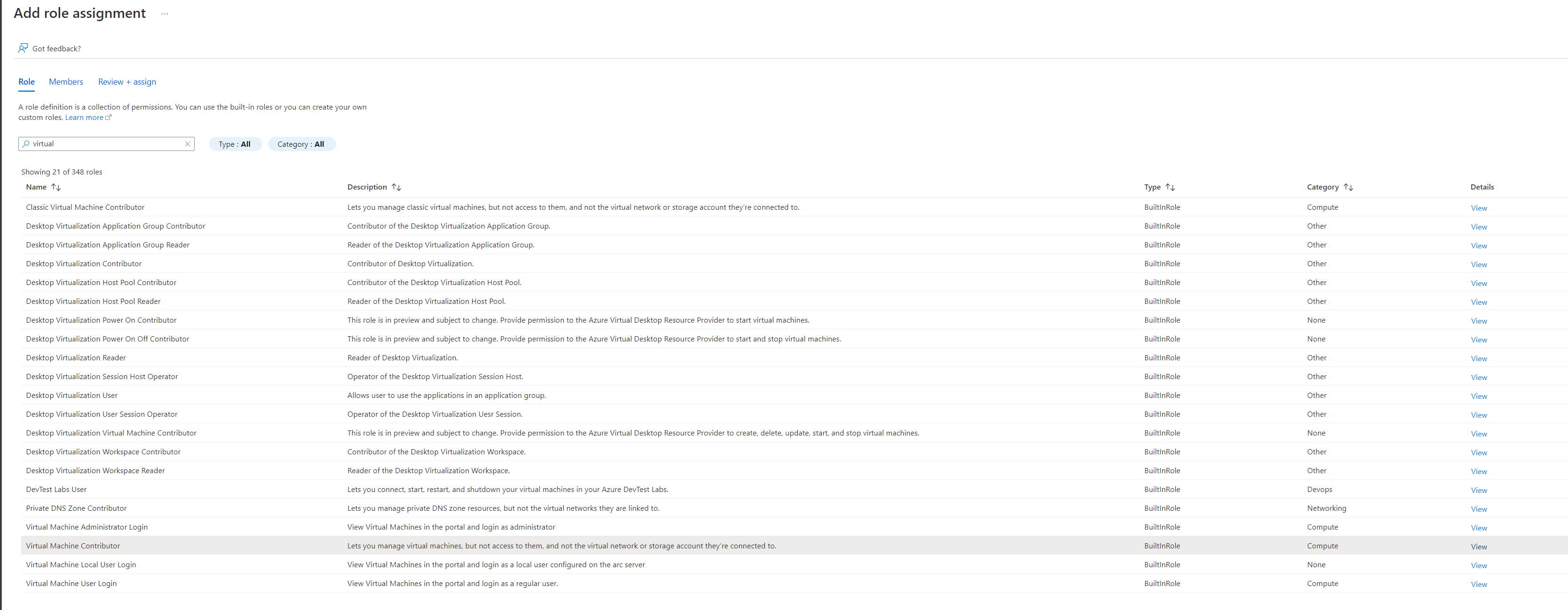

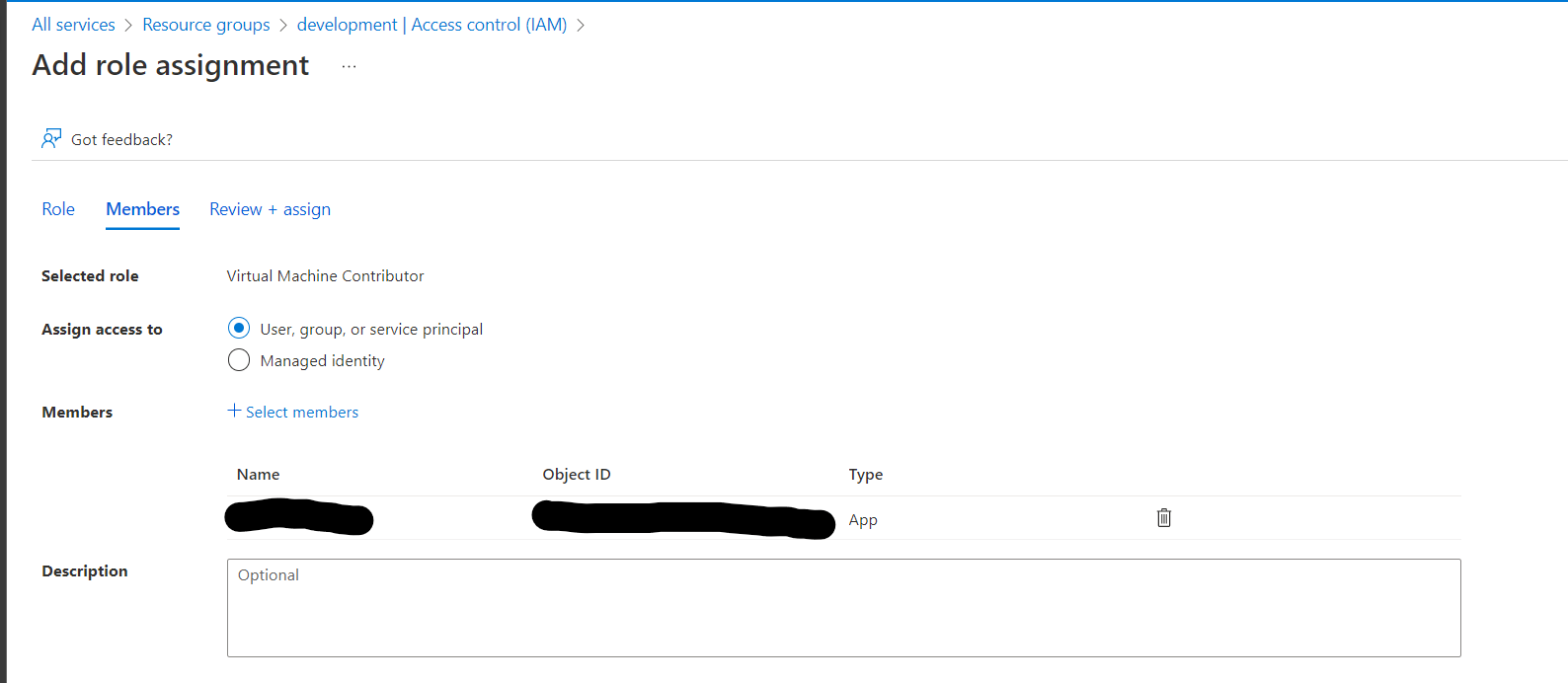

The app created in Azure will need several roles. First select the Virtual Machine Contributor role, then on the next page select the app by typing in its name (e.g. Kasm Workspaces).

Virtual Machine Contributor

Assign Contributor

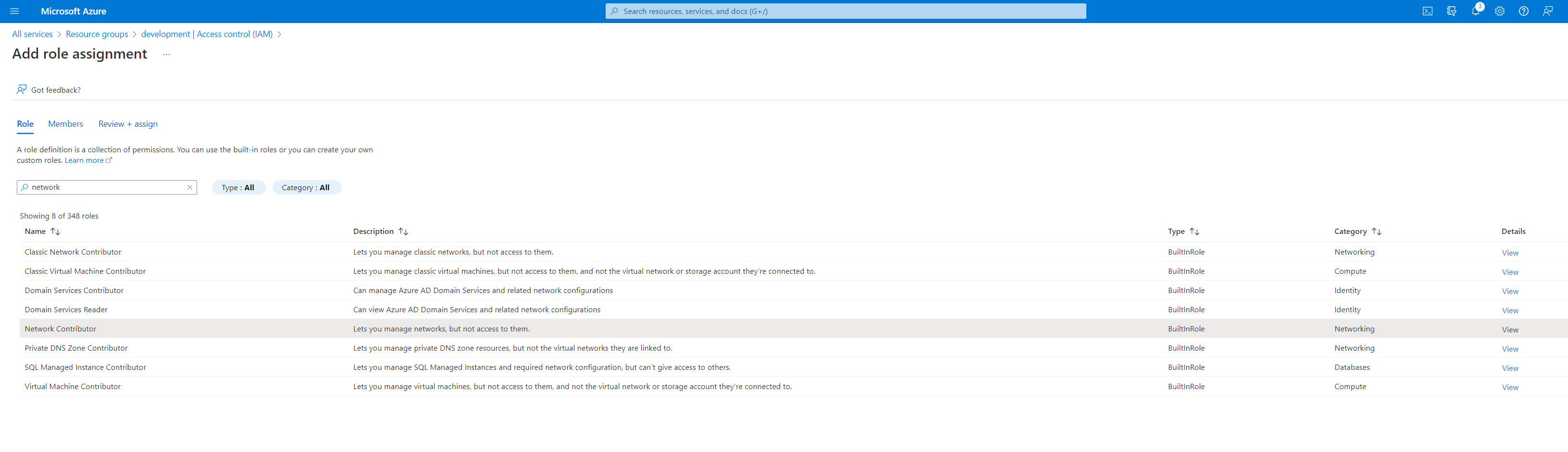

Go through this process again to add the Network Contributor and the DNS Zone Contributor roles

Network Contributor

DNS Zone Contributor

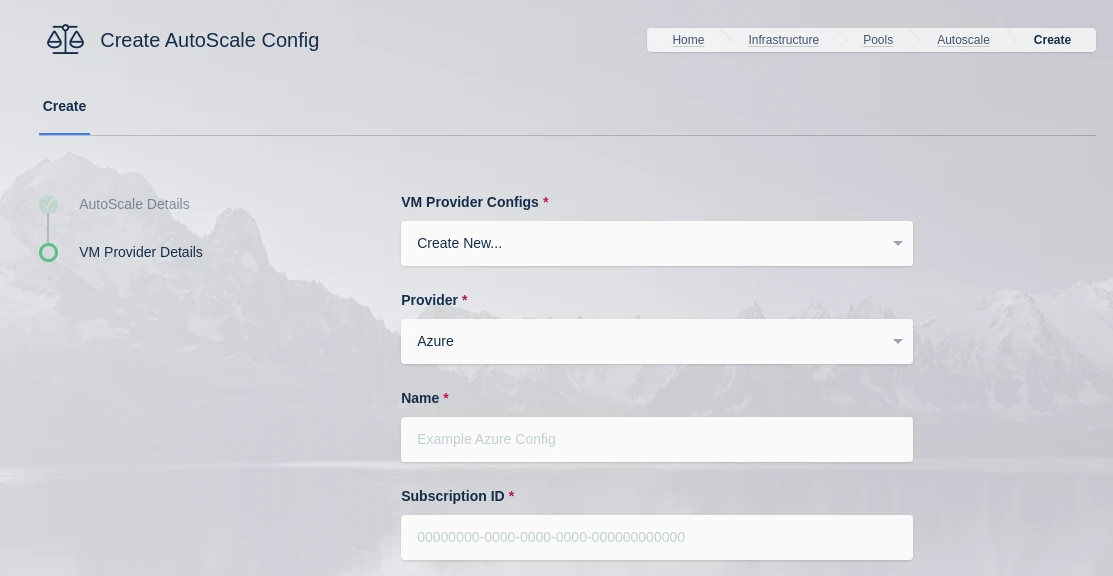

Azure VM Settings

A number of settings are required to be defined to use this functionality. The Azure settings appear in the Pool configuration when the feature is licensed.

Azure VM

| Name | Description |
|---|---|
| Name | A name to use to identify the config. |
| Subscription ID | The Subscription ID for the Azure Account. This can be found in the Azure portal by searching for Subscriptions in the search bar in Azure home, then selecting the subscription to use. |
| Resource Group | The Resource Group the DNS Zone and/or Virtual Machines belong to. |
| Tenant ID | The Tenant ID for the Azure Account. This can be found in the Azure portal by going to Azure Active Directory using the search bar in Azure home. |
| Client ID | The Client ID credential used to authenticate to the Azure Account. The Client ID can be obtained by registering an application within Azure Active Directory. |
| Client Secret | The Client Secret credential created with the registered application in Azure Active Directory. |
| Azure Authority | Which Azure authority to use. There are four: Azure Public Cloud, Azure Government, Azure China and Azure Germany. |
| Region | The Azure region where the Agents will be provisioned. |
| Max Instances | The maximum number of Azure VMs to provision, regardless of the need for additional resources. |
| VM Size | The size configuration of the Azure VM to provision. |
| OS Disk Type | The disk type to use for the Azure VM. |
| OS Disk Size (GB) | The size (in GB) of the boot volume to assign to the compute instance. |
| OS Image Reference (JSON) | The OS Image Reference configuration for the Azure VMs. |
| Image is Windows | Whether the VM being created is a Windows VM. |
| Network Security Group | The network security group to attach to the VM. |
| Subnet | The subnet to attach the VM to. |
| Assign Public IP | If checked, the VM will be assigned a public IP. If no public IP is assigned, the VM must be attached to a standard load balancer, or the subnet must have a NAT Gateway or user-defined route (UDR). If a public IP is used, the subnet must not also include a NAT Gateway. Reference |
| Tags (JSON) | A JSON dictionary of custom tags to assign to the VMs. |
| OS Username | The login username to assign to the new VM. |
| OS Password | The login password to assign to the new VM. Note: Password authentication is disabled for SSH by default. |
| SSH Public Key | The SSH public key to install on the VM for the defined user. |
| Agent Startup Script | When instances are provisioned, this script is executed and is responsible for installing and configuring the Kasm Agent. |
| Config Override (JSON) | Custom configuration may be added to the provision request for advanced use cases. The emitted JSON structure is visible by clicking JSON View when inspecting the VM in the Azure console. The keys in this configuration can be used to update top-level keys within the emitted JSON config. |
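As an illustration of the OS Image Reference (JSON) field, the sketch below checks a candidate value before it is saved. It assumes the field follows the Azure marketplace image reference shape (`publisher`, `offer`, `sku`, `version`). The helper name `parse_image_reference` and the Ubuntu values are illustrative assumptions, not values taken from the Kasm documentation.

```python
import json

# Keys of an Azure marketplace image reference (assumed shape for this field).
REQUIRED_KEYS = {"publisher", "offer", "sku", "version"}


def parse_image_reference(raw):
    """Parse the JSON text and confirm the expected keys are present."""
    ref = json.loads(raw)
    if not isinstance(ref, dict):
        raise ValueError("OS Image Reference must be a JSON dictionary")
    missing = REQUIRED_KEYS - ref.keys()
    if missing:
        raise ValueError("missing keys: " + ", ".join(sorted(missing)))
    return ref


# Illustrative values only; substitute the image you actually intend to use.
example = json.dumps({
    "publisher": "canonical",
    "offer": "0001-com-ubuntu-server-jammy",
    "sku": "22_04-lts-gen2",
    "version": "latest",
})

image_ref = parse_image_reference(example)
print(json.dumps(image_ref, indent=2))
```

Checking the shape locally catches malformed JSON early, before a provisioning attempt fails in Azure.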

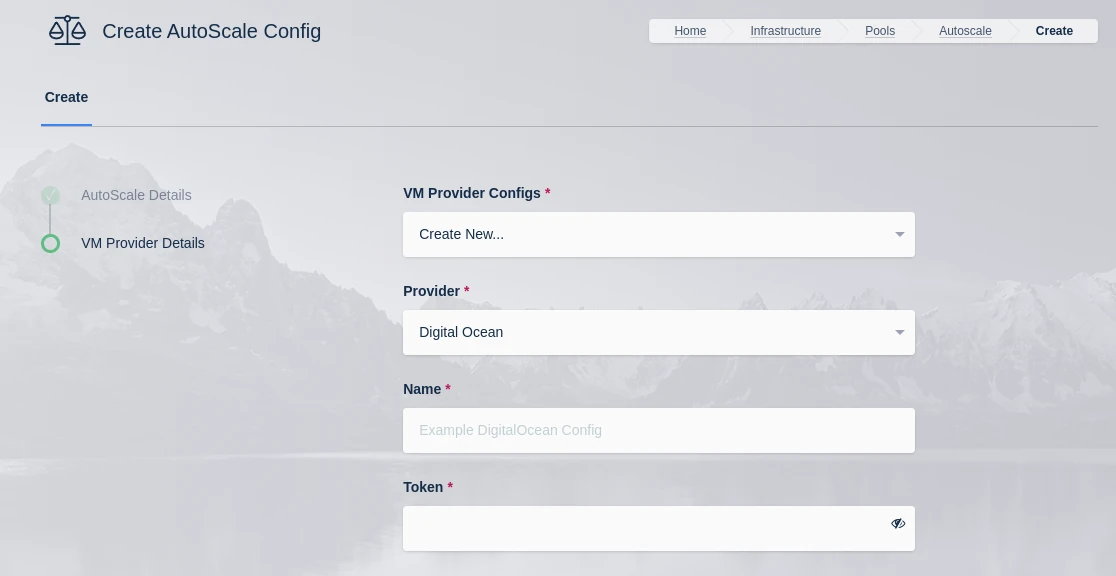

Digital Ocean Settings

Note

A detailed guide on Digital Ocean AutoScale configuration is available Here

Warning

Please review Tag Does Not Exist Error for known issues and workarounds

Digital Ocean VM Provider

| Name | Description |
|---|---|
| Name | A name to use to identify the config. |
| Token | The token to use to connect to this VM. |
| Max Droplets | The maximum number of Digital Ocean droplets to provision, regardless of whether more are needed to fulfill user demand. |
| Region | The Digital Ocean Region where droplets should be provisioned (e.g. nyc3). |
| Image | The Image to use when creating droplets (e.g. docker-24-04). See Digital Ocean documentation for more details. |
| Droplet Size | The droplet size configuration (e.g. c-2). See https://slugs.do-api.dev for more details. |
| Tags | One or more tags to assign to the droplet when it is created. This should be a comma-separated list of tags. |
| SSH Key Name | The SSH Key to assign to the newly created droplets. The SSH Key must already exist in the Digital Ocean Account. |
| Firewall Name | The name of the Firewall to apply to the newly created droplets. This Firewall must already exist in the Digital Ocean Account. Go to your Digital Ocean dashboard -> “Networking” -> “Firewalls” to view existing firewalls or create a new firewall. |
| Startup Script | When droplets are provisioned, this script is executed. The script is responsible for installing and configuring the Kasm Agent. Example scripts can be found on our GitHub repository. |

Tag Does Not Exist Error

Upon first testing AutoScaling with Digital Ocean, an error similar to the following may be presented:

    Future generated an exception: tag zone:abc123 does not exist
    traceback:
    ..
    File "digitalocean/Firewall.py", line 225, in add_tags
    File "digitalocean/baseapi.py", line 196, in get_data
    digitalocean.DataReadError: tag zone:abc123 does not exist
    process: manager_api_server

This error occurs when Kasm Workspaces tries to assign a unique tag based on the Zone Id to the Digital Ocean Firewall. If that tag does not already exist in Digital Ocean, the operation will fail and present the error.

To work around the issue, manually create a tag matching the one specified in the error (e.g. zone:abc123) via the Digital Ocean console. This can be done via API, or simply by creating the tag on a temporary Droplet.
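For the API route, a minimal Python sketch is shown here. It builds a request for the Digital Ocean tags endpoint (`POST /v2/tags`) using only the standard library. The token is a placeholder, the helper name `build_create_tag_request` is hypothetical, and the tag name should be copied from the error message in your own environment.

```python
import json
import urllib.request

DO_TAGS_URL = "https://api.digitalocean.com/v2/tags"


def build_create_tag_request(token, tag_name):
    """Build (but do not send) the request that creates a tag."""
    body = json.dumps({"name": tag_name}).encode("utf-8")
    return urllib.request.Request(
        DO_TAGS_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": "Bearer " + token,
            "Content-Type": "application/json",
        },
    )


# Placeholder token; the tag name matches the example error above.
request = build_create_tag_request("YOUR_DO_API_TOKEN", "zone:abc123")
print(request.get_method(), request.full_url)

# To actually create the tag, send the request:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

The request is only constructed here so the sketch can be run without credentials. Uncomment the last lines to send it with a real API token.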

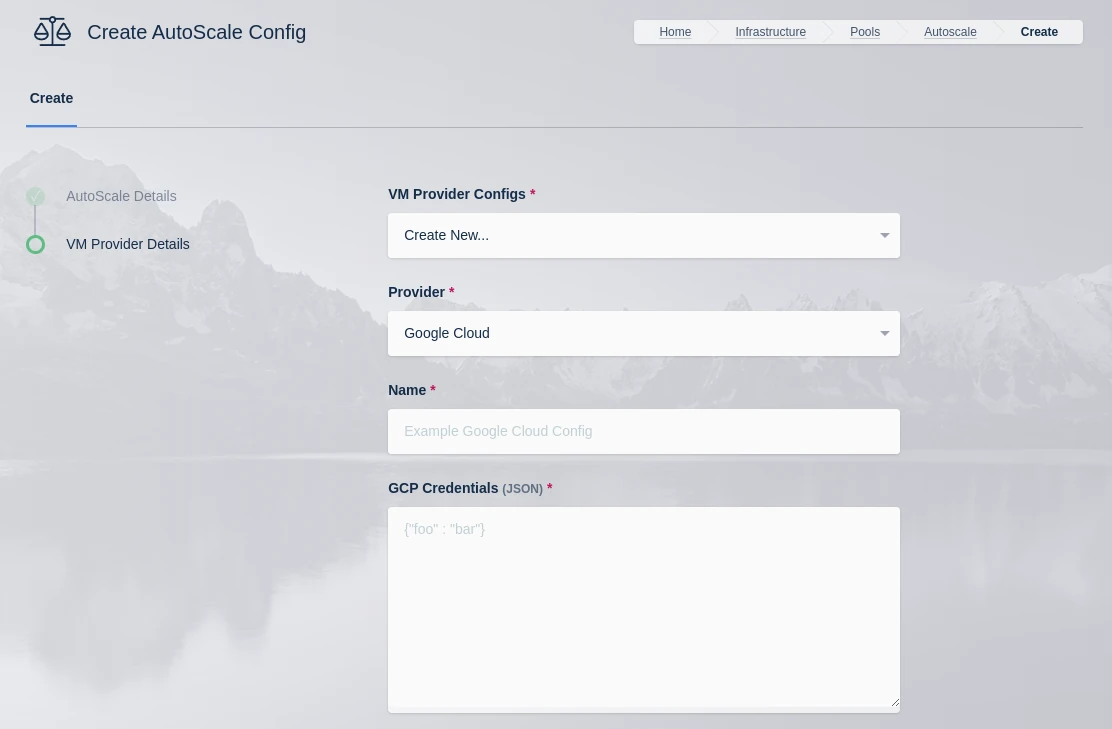

Google Cloud (GCP) Settings

Note

A detailed guide on GCP AutoScale configuration is available Here

GCP VM Provider

| Name | Description |
|---|---|
| Name | An identifying name for this provider configuration (e.g. Google Cloud (GCP) Docker Agent Autoscale Provider). |
| GCP Credentials | The JSON formatted credentials for the service account used to authenticate with GCP: Ref |
| Max Instances | The maximum number of GCP compute instances to provision, regardless of the need for additional resources. |
| Project ID | The Google Cloud Project ID (e.g. pensive-voice-547511). |
| Region | The region in which to provision the new compute instances (e.g. us-east4). |
| Zone | The zone the new compute instance will be provisioned in (e.g. us-east4-b). |
| Machine Type | The Machine type for the GCP compute instances (e.g. e2-standard-2). |
| Machine Image | The Machine Image to use for the new compute instance (e.g. projects/ubuntu-os-cloud/global/images/ubuntu-2004-focal-v20211212). |
| Boot Volume GB | The size (in GB) of the boot volume to assign to the compute instance. |
| Disk Type | The disk type for the new instance (e.g. pd-ssd, pd-standard, etc.). |
| Customer Managed Encryption Key (CMEK) | The optional path to the Customer Managed Encryption Key (CMEK) (e.g. projects/pensive-voice-547511/locations/global/keyRings/my-keyring/cryptoKeys/my-key). |
| Network | The path of the Network to attach to the new instance (e.g. projects/pensive-voice-547511/global/networks/default). |
| Sub Network | The path of the Sub Network to attach to the new instance (e.g. projects/pensive-voice-547511/regions/us-east4/subnetworks/default). |
| Public IP | If checked, a public IP will be assigned to the new instances. |
| Network Tags (JSON) | A JSON list of the Network Tags to assign to the new instance. |
| Custom Labels (JSON) | A JSON dictionary of Custom Labels to assign to the new instance. |
| Metadata (JSON) | A JSON list of metadata objects to add to the instance. |
| Service Account (JSON) | A JSON dictionary representing a service account to attach to the instance. |
| Guest Accelerators (JSON) | A JSON list representing the guest accelerators (e.g. GPUs) to attach to the instance. |
| GCP Config Override (JSON) | A JSON dictionary that can be used to customize attributes of the VM request. Some attributes cannot be overridden. |
| VM Installed OS Type | The family of the OS installed on the VM (e.g. linux or windows). |
| Startup Script Type | The type of startup script to execute. This determines the key used when creating the GCP startup script metadata. See Windows Startup Scripts and Linux Startup Scripts. |
| Startup Script | When instances are provisioned, this script is executed and is responsible for installing and configuring the Kasm Agent. Bash is supported on Linux instances and PowerShell on Windows instances. Example scripts can be found on our GitHub repo. |

Note on Updating Existing Google Cloud Providers (GCP)

Please review the settings for all existing Google Cloud Providers (GCP). Two new fields were added: VM Installed OS Type, which defaults to Linux, and Startup Script Type, which defaults to Bash Script. If the existing provider is configured with a Windows VM, it will not successfully launch the startup script without changing these values.
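To illustrate why the OS type and the script type must agree, the sketch below maps a script type to the metadata key that GCP documents for startup scripts (`startup-script` for Linux, `windows-startup-script-ps1` for PowerShell on Windows). The option labels `"bash"` and `"powershell"` and the helper `startup_metadata_item` are hypothetical. How Kasm builds the metadata internally is not shown in this document.

```python
# Metadata keys that GCP documents for startup scripts.
STARTUP_SCRIPT_KEYS = {
    "bash": "startup-script",
    "powershell": "windows-startup-script-ps1",
}


def startup_metadata_item(os_type, script_type, script):
    """Return a GCP metadata item, rejecting mismatched OS and script types."""
    # Assumption: Bash pairs with Linux and PowerShell pairs with Windows.
    expected = "powershell" if os_type == "windows" else "bash"
    if script_type != expected:
        raise ValueError(
            "script type %r does not match OS type %r" % (script_type, os_type)
        )
    return {"key": STARTUP_SCRIPT_KEYS[script_type], "value": script}


item = startup_metadata_item("windows", "powershell", "Write-Host 'installing agent'")
print(item["key"])
```

A Windows provider left at the Linux and Bash defaults would fail this check, which mirrors the situation described in the note above.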

Harvester Settings

Note

A detailed guide on Harvester AutoScale configuration is available Here

| Setting | Description |
|---|---|
| Name | An identifying name for this provider configuration (e.g. Harvester Docker Agent Autoscale Provider). |
| Max Instances* | The maximum number of autoscale instances to be provisioned, regardless of other settings. |
| Host | The address of the Harvester instance, from the KubeConfig file (e.g. https://harvester.example.com/k8s/clusters/local). |
| SSL Certificate | The Harvester certificate as a base64 encoded string, from the KubeConfig file. |
| API Token | The API token for authentication to Harvester, from the KubeConfig file. |
| VM Namespace | The name of the Harvester namespace where the VMs will be provisioned. |
| VM SSH Public Key | A public key to add to the autoscale agents. It is then provided as {ssh_key} for use in the startup script. |
| Cores | The number of CPU cores to configure for the autoscale agents. |
| Memory | The amount of memory in GiB for the autoscale agents. |
| Disk Image | The name of the Harvester image to use for autoscale agents. See Disk Image for more details. |
| Disk Size | The size of the disk in GiB to use for autoscale agents. |
| Network Type | The network type for the autoscale agents (pod or multus). |
| Interface Type | The interface type for the autoscale agents (masquerade or bridge). |
| Network Name | The name of the network to connect the autoscale agents to (multus network type only). |
| Startup Script | A cloud-init, Bash, or PowerShell script to run after agent creation, typically to install the Kasm Agent and/or any other runtime dependencies you may have. Example scripts can be found on our GitHub repository. Make sure to use the correct script for the target OS (Bash or cloud-init for Linux and PowerShell for Windows). |
| Configuration Override | An optional config override that contains a complete YAML manifest file used when provisioning the autoscale agents. |
| Enable TPM | Enable TPM for the autoscale agents. |
| Enable EFI Boot | Enable the EFI bootloader for the autoscale agents. |
| Enable Secure Boot | Enable Secure Boot for the autoscale agents (requires EFI Boot to be enabled). |
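A few of the Harvester settings depend on one another. The sketch below is a small pre-flight check in Python. The function `check_harvester_options` is hypothetical, and treating Network Name as required for the multus network type is an assumption drawn from the "multus network type only" wording, not a documented rule.

```python
def check_harvester_options(enable_efi, enable_secure_boot, network_type, network_name):
    """Return a list of human-readable problems; an empty list means no issue found."""
    problems = []
    # Secure Boot is documented as requiring EFI Boot.
    if enable_secure_boot and not enable_efi:
        problems.append("Secure Boot requires EFI Boot to be enabled")
    # Network Type is documented as either pod or multus.
    if network_type not in ("pod", "multus"):
        problems.append("Network Type must be 'pod' or 'multus'")
    # Assumption: a Network Name is needed when the multus network type is used.
    if network_type == "multus" and not network_name:
        problems.append("Network Name should be set for the multus network type")
    return problems


print(check_harvester_options(False, True, "multus", ""))
```

Running the example reports two problems: Secure Boot without EFI Boot, and a multus network without a name.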

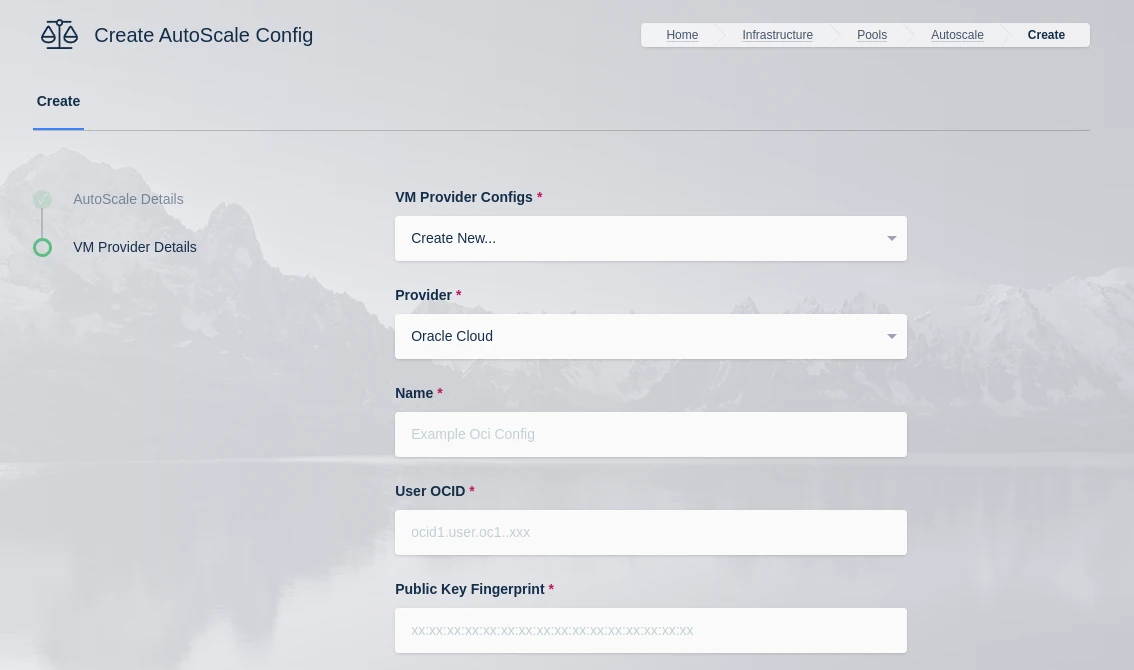

Oracle Cloud (OCI) Settings

Note

A detailed guide on OCI AutoScale configuration is available Here

OCI VM Provider

| Name | Description |
|---|---|
| Name | A name to use to identify the config. |
| User OCID | The OCID of the user to authenticate with the OCI API (e.g. ocid1.user.oc1..xyz). To find it, go to your OCI dashboard and click on your Profile; the user OCID is shown there. |
| Public Key Fingerprint | The public key fingerprint of the authenticated API user created in OCI (e.g. xx:yy:zz:11:22:33). |
| Private Key | The private key (PEM format) of the authenticated API user created in OCI. |
| Region | The OCI Region name (e.g. us-ashburn-1). See Regions for the list. |
| Tenancy OCID | The Tenancy OCID for the OCI account (e.g. ocid1.tenancy.oc1..xyz). |
| Compartment OCID | The Compartment OCID where the auto-scaled agents will be placed (e.g. ocid1.compartment.oc1..xyx). |
| Network Security Group OCIDs (JSON) | A JSON list of Security Group OCIDs that will be assigned to the auto-scaled agents. |
| Max Instances | The maximum number of OCI compute instances to provision, regardless of the need for available free slots. |
| Availability Domains (JSON) | A JSON list of availability domains where the OCI compute instances may be placed. |
| Image OCID | The OCID of the Image to use when creating the compute instances (e.g. ocid1.image.oc1.iad.xyz). See OCI Image Families for the list. |
| Shape | The name of the shape used for the created compute instances (e.g. VM.Standard.E4.Flex). See OCI Compute Shapes for the list. |
| Flex CPUs | The number of OCPUs to assign to the compute instance. This is only applicable when a Flex shape is used. |
| Burstable Base CPU Utilization | The baseline percentage of a CPU Core that can be used continuously on a burstable instance (select 100% to use a non-burstable instance). Reference. |
| Flex Memory GB | The amount of memory (in GB) to assign to the compute instance. This is only applicable when a Flex shape is used. |
| Boot Volume GB | The size (in GB) of the boot volume to assign to the compute instance. |
| Boot Volume VPUs Per GB | The Volume Performance Units (VPUs) to assign to the boot volume. Values between 10 and 120 in multiples of 10 are acceptable. 10 is the default and represents the Balanced profile. The higher the VPUs, the higher the volume performance and cost. Reference. |
| Custom Tags (JSON) | A JSON dictionary of custom freeform tags to assign to the auto-scaled instances. |
| Subnet OCID | The OCID of the Subnet where the auto-scaled instances will be placed (e.g. ocid1.subnet.oc1.iad.xyz). To create or find existing subnets, go to your OCI dashboard -> “Networking” -> “Virtual Cloud Networks” -> “Subnets”. |
| SSH Public Key | The SSH public key to insert into the compute instances if you want to SSH into your instances (e.g. ssh-rsa XYABC). |
| Startup Script | When instances are provisioned, this script is executed and is responsible for installing and configuring the Kasm Agent. Example scripts can be found on our GitHub repository. |
| OCI Config Override | A JSON dictionary that can be used to customize attributes of the VM request. An OCI Model can be specified with the “OCI_MODEL_NAME” key. Reference: OCI Python Docs and Kasm Examples. |

You can find the OCI Image ID for the desired operating system version and region by navigating the OCI Image page.

OCI Config Override Examples

Below are some OCI autoscale configurations that utilize the OCI Config Override.

Disable Legacy Instance Metadata Service

Disables the legacy instance metadata service (IMDSv1) endpoints for additional security.

    {
        "launch_instance_details": {
            "instance_options": {
                "OCI_MODEL_NAME": "InstanceOptions",
                "are_legacy_imds_endpoints_disabled": true
            }
        }
    }

Enable Instance Agent Plugins

A list of available plugins can be retrieved by navigating to an existing instance’s “Oracle Cloud Agent” config page. This example enables the “Vulnerability Scanning” plugin.

    {
        "launch_instance_details": {
            "agent_config": {
                "OCI_MODEL_NAME": "LaunchInstanceAgentConfigDetails",
                "is_monitoring_disabled": false,
                "is_management_disabled": false,
                "are_all_plugins_disabled": false,
                "plugins_config": [{
                    "OCI_MODEL_NAME": "InstanceAgentPluginConfigDetails",
                    "name": "Vulnerability Scanning",
                    "desired_state": "ENABLED"
                }]
            }
        }
    }
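Both examples place their settings under the same `launch_instance_details` key, so they can be combined into a single override. The Python sketch below merges the two dictionaries recursively and prints the result as JSON. The `deep_merge` helper is illustrative, and it is an assumption that a single override containing both blocks is accepted as-is.

```python
import json


def deep_merge(base, extra):
    """Return a new dict with `extra` merged into `base`, recursing into nested dicts."""
    merged = dict(base)
    for key, value in extra.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


imds_override = {
    "launch_instance_details": {
        "instance_options": {
            "OCI_MODEL_NAME": "InstanceOptions",
            "are_legacy_imds_endpoints_disabled": True,
        }
    }
}

plugin_override = {
    "launch_instance_details": {
        "agent_config": {
            "OCI_MODEL_NAME": "LaunchInstanceAgentConfigDetails",
            "is_monitoring_disabled": False,
            "is_management_disabled": False,
            "are_all_plugins_disabled": False,
            "plugins_config": [{
                "OCI_MODEL_NAME": "InstanceAgentPluginConfigDetails",
                "name": "Vulnerability Scanning",
                "desired_state": "ENABLED",
            }],
        }
    }
}

combined = deep_merge(imds_override, plugin_override)
print(json.dumps(combined, indent=2))
```

The merge leaves the two input dictionaries unchanged, so each can still be used on its own.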

Nutanix Settings

Note

A detailed guide on Nutanix AutoScale configuration is available Here

| Setting | Description |
|---|---|
| Name | An identifying name for this provider configuration (e.g. Nutanix Docker Agent Autoscale Provider). |
| Max Instances | The maximum number of autoscale instances to be provisioned, regardless of other settings. |
| Host | The IP or FQDN of the Nutanix Prism Central server (e.g. 192.168.100.40 or nutanix.example.com). |
| Port | The listening port of the Nutanix Prism Central server. This is usually 9440. |
| Username | The name of the user that Kasm will use to access the Nutanix Prism Central server. |
| Password | The password of the user that Kasm will use to authenticate against the Nutanix Prism Central server. |
| Verify SSL | Whether to validate SSL certificates. Set to False to allow self-signed certificates. Defaults to True. |
| API Version | The API version used to communicate with Prism Central. v3 is recommended; v4 compatibility is currently in Preview. |
| VM Candidate Name | The name of the VM used to clone new autoscaled VMs. |
| VM Cores | The number of CPU cores to provision on the new autoscaled VMs. |
| VM Memory | The amount of memory, in gibibytes (GiB), to provision on the new autoscaled VMs. |
| Startup Script | When VMs are provisioned, this script is executed and is responsible for installing and configuring the Kasm Agent for Docker Agent roles, or the Kasm Desktop Service for Windows VMs if desired. Bash scripts and cloud-config YAML formats are supported on Linux hosts, and PowerShell scripts on Windows hosts. Example scripts are available on our GitHub repository. |

Proxmox Settings

Note

A detailed guide on Proxmox AutoScale configuration is available Here

| Setting | Description |
|---|---|
| Name | An identifying name for this provider configuration (e.g. Proxmox Docker Agent Autoscale Provider). |
| Max Instances | The maximum number of autoscale instances to be provisioned, regardless of other settings. |
| Host | The hostname or IP and port of your Proxmox instance (e.g. 192.168.100.40:8006). |
| Username | The name of the autoscale user in Proxmox, including the auth realm (e.g. KasmUser@pve). |
| Token Name | The name of the API token associated with the user (e.g. kasm_token and not KasmUser@pve!kasm_token). |
| Token Value | The secret value of the API token associated with the user. |
| Verify SSL | Whether or not to verify the SSL certs in the Proxmox environment. Disable if you are using self-signed certs. |
| VMID Range Lower | The start of the VMID range for Kasm to use for autoscale agents. Must not overlap with any other Proxmox autoscale providers configured in Kasm. |
| VMID Range Upper | The end of the VMID range for Kasm to use for autoscale agents. Must not overlap with any other Proxmox autoscale providers configured in Kasm. |
| Full Clone | If enabled, performs a full clone rather than a linked clone. A linked clone is faster to provision but will have reduced performance compared to a full clone. |
| Template Name | The name of the VM template to use when cloning new autoscale agents. |
| Cluster Node Name | The name of the Proxmox node containing the VM template. |
| Resource Pool Name | The resource pool to use for cloning the new autoscale agents. |
| Storage Pool Name | Optionally specify a storage pool to use for cloning the new autoscale agents. This requires Full Clone to be enabled. |
| Target Node Name | Optionally specify a cluster node to provision new autoscale agents on (defaults to the Cluster Node Name). |
| VM Cores | The number of CPU cores to configure for the autoscale agents. |
| VM Memory | The amount of memory in GiB for the autoscale agents. |
| Installed OS Type | Linux or Windows. |
| Startup Script Path | The absolute path to where the startup script will be uploaded and run from, typically /tmp for Linux or C:\windows\temp for Windows. The path must exist on the template. |
| Startup Script | Bash (Linux) or PowerShell (Windows) startup script to run after agent creation, typically to install the Kasm Agent and/or any other runtime dependencies. Example scripts are available on our GitHub repository. |
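Because the VMID ranges of different Proxmox providers must not overlap, it can help to check planned ranges before saving them. The Python sketch below does this for a set of hypothetical provider names and ranges. It assumes both the lower and upper bounds are inclusive, which this document does not state.

```python
def ranges_overlap(a, b):
    """True if two inclusive (lower, upper) VMID ranges share at least one ID."""
    return a[0] <= b[1] and b[0] <= a[1]


def find_overlaps(providers):
    """Return the pairs of provider names whose VMID ranges overlap."""
    names = sorted(providers)
    overlaps = []
    for index, first in enumerate(names):
        for second in names[index + 1:]:
            if ranges_overlap(providers[first], providers[second]):
                overlaps.append((first, second))
    return overlaps


# Hypothetical provider names and ranges, for illustration only.
planned = {
    "proxmox-linux": (1000, 1099),
    "proxmox-windows": (1100, 1199),
    "proxmox-gpu": (1150, 1250),
}

print(find_overlaps(planned))
```

In this example only `proxmox-gpu` and `proxmox-windows` collide, since IDs 1150 through 1199 fall in both ranges.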

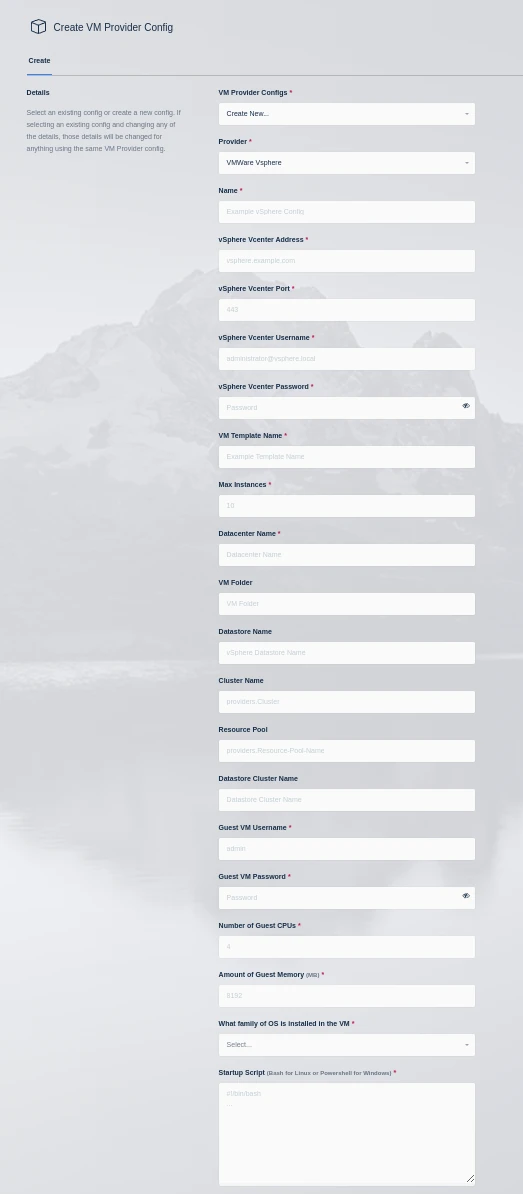

VMware vSphere Settings

Note

A detailed guide on vSphere AutoScale configuration is available Here

vSphere VM Provider

| Setting | Description |
|---|---|
| Name | An identifying name for this provider configuration. |
| vSphere vCenter Address | The IP or FQDN of the VMware vSphere vCenter server to use. |
| vSphere vCenter Port | The management port of your vCenter instance (typically 443). |
| vSphere vCenter Username | The username to use when authenticating with the vSphere vCenter server (e.g. kasm-autoscale). |
| vSphere vCenter Password | The password to use when authenticating with the vSphere vCenter server. |
| VM Template Name | The name of the template VM to use when cloning new autoscaled VMs. |
| Max Instances | The maximum number of vSphere VM instances to provision, regardless of the need for available free slots. |
| Datacenter Name | The datacenter to use for cloning the new vSphere VM instances. |
| VM Folder | The VM folder to use for cloning the new vSphere VM instances. This field is optional; if left blank, the VM folder of the template is used. |
| Datastore Name | The datastore to use for cloning the new vSphere VM instances. This field is optional; if left blank, the datastore of the template is used. |
| Cluster Name | The cluster to use for cloning the new vSphere VM instances. This field is optional; if left blank, the cluster of the template is used. |
| Resource Pool | The resource pool to use for cloning the new vSphere VM instances. This field is optional; if left blank, the resource pool of the template is used. |
| Datastore Cluster Name | The datastore cluster to use for cloning the new vSphere VM instances. This field is optional; if left blank, the datastore cluster of the template is used. |
| Guest VM Username | The username to use for running the startup script on the new vSphere VM instance. This account should have sufficient privileges to execute all commands in the startup script. |
| Guest VM Password | The password for the Guest VM Username account. |
| Number of Guest CPUs | The number of CPUs to configure on new vSphere VM instances. This option is not dependent on the number of CPUs configured on the template. |
| Amount of Guest Memory(GiB) | The amount of memory in gibibytes (GiB) to configure on new vSphere VM instances. This option is not dependent on the amount of memory configured on the template. |
| What family of OS is installed in the VM | Whether the template OS is Linux or Windows. This is needed to ensure proper execution of the startup script. |
| Startup Script | When instances are provisioned, this script is executed and is responsible for installing and configuring the Kasm Agent. Scripts are run as Bash scripts on a Linux host and PowerShell scripts on a Windows host. Example scripts are available on our GitHub repository. Additional troubleshooting steps can be found in the Creating Templates For Use With The VMware vSphere Provider section of the server documentation. |

Permissions for vCenter service account

These are the minimum permissions that your service account requires in vCenter based on a default configuration. The account might require additional privileges depending on specific features and configurations you have in place. We advise creating a dedicated service account for Kasm Workspaces autoscaling with these permissions to enhance security and minimize potential risks.

- Datastore
  - Allocate space
  - Browse datastore
- Global
  - Cancel task
- Network
  - Assign network
- Resource
  - Assign virtual machine to resource pool
- Virtual machine
  - Change Configuration
    - Change CPU count
    - Change Memory
    - Set annotation
  - Edit Inventory
    - Create from existing
    - Create new
    - Remove
    - Unregister
  - Guest operations
    - Guest operation modifications
    - Guest operation program execution
    - Guest operation queries
  - Interaction
    - Power off
    - Power on
  - Provisioning
    - Deploy template

Network Connectivity

The agent startup scripts utilize VMware’s guest script execution via VMware Tools. This functionality requires direct HTTPS connectivity between the Kasm Workspace Manager and the ESXi host(s) running the agent VMs.

Notes on vSphere Datastore Storage

When configuring VMware vSphere with Kasm Workspaces, one important item to keep in mind is datastore storage. When clones are created, VMware will attempt to satisfy the clone operation. If the datastore runs out of space, any VMs that are running on that datastore will be paused until space is available. Kasm Workspaces recommends that critical management VMs, such as the vCenter server VM and cluster management VMs, are on separate datastores that are not used for Kasm autoscaling.

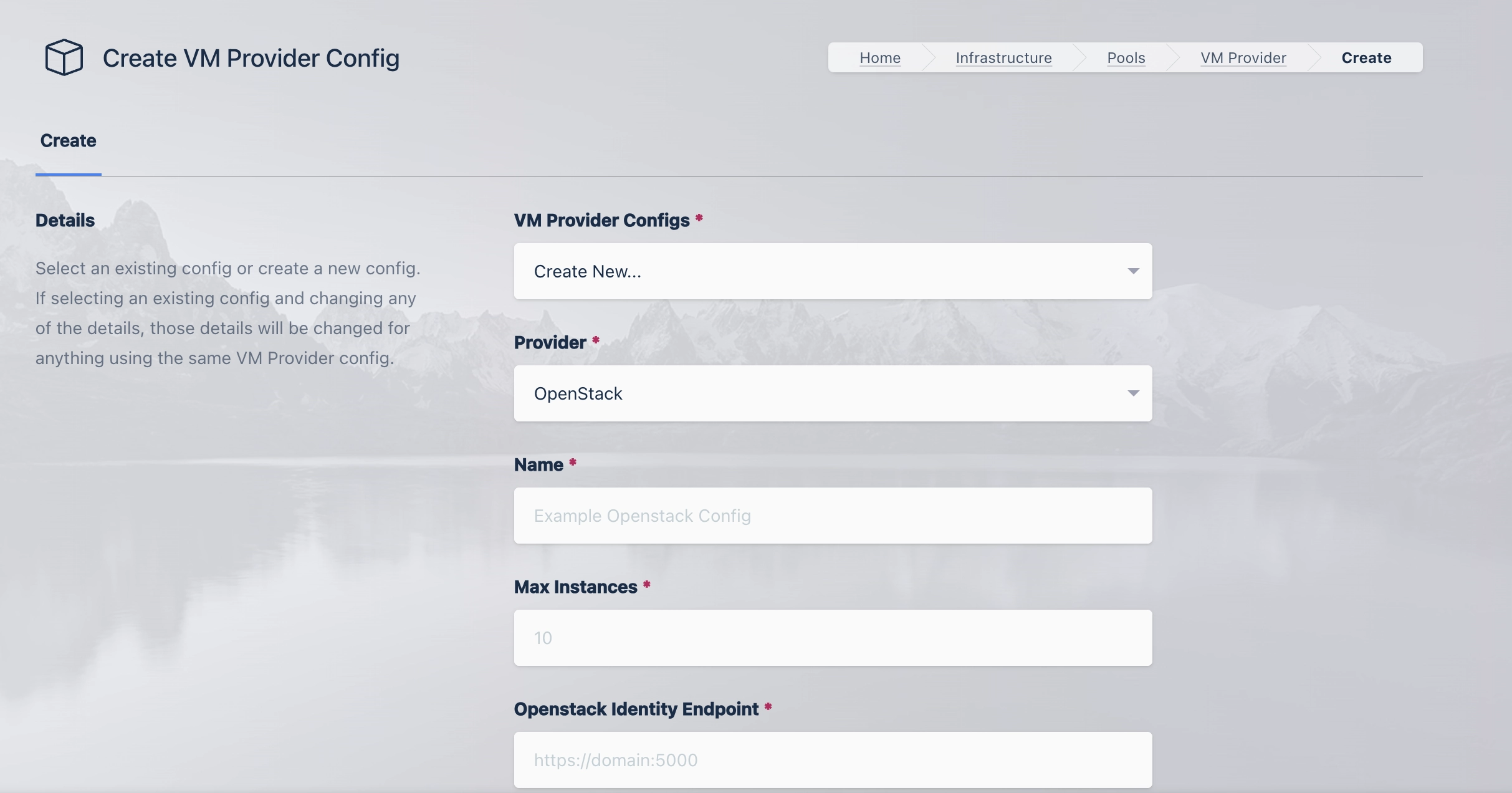

OpenStack Settings

A number of settings are required to be defined to use this functionality. The OpenStack settings appear in the Pool configuration when the feature is licensed.

The appropriate OpenStack configuration options can be found by using the “API Access” page of the OpenStack UI and downloading the “OpenStack RC File”.

OpenStack VM

| Name | Description |
|---|---|
| Name | A name to use to identify the config. |
| Max Instances | The maximum number of OpenStack compute instances to provision, regardless of the need for additional resources. |
| OpenStack Identity Endpoint | The endpoint address of the OpenStack Keystone endpoint. |
| OpenStack Nova Endpoint | The endpoint address of the OpenStack Nova (Compute) endpoint. |
| OpenStack Nova Version | The version to use with the OpenStack Nova (Compute) endpoint. |
| OpenStack Glance Endpoint | The endpoint address of the OpenStack Glance (Image) endpoint. |
| OpenStack Glance Version | The version to use with the OpenStack Glance (Image) endpoint. |
| OpenStack Cinder Endpoint | The endpoint address of the OpenStack Cinder (Volume) endpoint. Note: The address contains the OpenStack Project ID. |
| OpenStack Cinder Version | The version to use with the OpenStack Cinder (Volume) endpoint. |
| Project Name | The name of the OpenStack Project where VMs will be provisioned. |
| Authentication Method | The kind of credential used to authenticate against the OpenStack Endpoints. |
| Application Credential ID | The Credential ID of the OpenStack Application Credential. |
| Application Credential Secret | The OpenStack Application Credential secret. |
| Project Domain Name | The Domain that the OpenStack Project belongs to. |
| User Domain Name | The Domain that the OpenStack User belongs to. |
| Username | The Username of the OpenStack User used to authenticate against OpenStack. |
| Password | The Password of the OpenStack User used to authenticate against OpenStack. |
| Metadata | A JSON dictionary containing the metadata tags applied to the OpenStack VMs. |
| Image ID | The ID of the Image used to provision OpenStack VMs. |
| Flavor | The name of the desired Flavor for the OpenStack VM. |
| Create Volume | Enable to create a new Block storage (Cinder) volume for the OpenStack VM. When disabled, ephemeral Compute (Nova) storage is used. |
| Volume Size (GB) | The desired size of the VM Volume in GB. This can only be specified when “Create Volume” is enabled. |
| Volume Type | The type of volume to use for the new OpenStack VM Volume. |
| Startup Script | When OpenStack VMs are provisioned, this script is executed. The script is responsible for installing and configuring the Kasm Agent. |
| Security Groups | A list containing the security groups applied to the OpenStack VM. |
| Network ID | The ID of the network that the OpenStack VMs will be connected to. |
| Key Name | The name of the SSH Key used to connect to the instance. |
| Availability Zone | The name of the Availability Zone that the OpenStack VM will be placed into. |
| Config Override | A JSON dictionary that can be used to customize attributes of the VM request. |

Openstack Notes

Openstack Endpoints Require Trusted Certificates

The OpenStack provider requires that OpenStack endpoints present trusted, signed TLS certificates. This can be done through an API gateway that presents a valid certificate or by configuring valid certificates on each individual service (Reference: OpenStack Docs).

Application Credential Access Rules

OpenStack application credentials allow administrators to specify Access Rules that restrict the permissions of an application credential further than a role might allow. Below is an example of the minimum set of permissions that Kasm Workspaces requires in an Application Credential:

- service: volumev3
  method: POST
  path: /v3/*/volumes
- service: volumev3
  method: DELETE
  path: /v3/*/volumes/*
- service: volumev3
  method: GET
  path: /v3/*/volumes
- service: volumev3
  method: GET
  path: /v3/*/volumes/*
- service: volumev3
  method: GET
  path: /v3/*/volumes/detail
- service: compute
  method: GET
  path: /v2.1/servers/detail
- service: compute
  method: GET
  path: /v2.1/servers
- service: compute
  method: GET
  path: /v2.1/flavors
- service: compute
  method: GET
  path: /v2.1/flavors/*
- service: compute
  method: GET
  path: /v2.1/servers/*/os-volume_attachments
- service: compute
  method: GET
  path: /v2.1/servers/*
- service: compute
  method: GET
  path: /v2.1/servers/*/os-interface
- service: compute
  method: POST
  path: /v2.1/servers
- service: compute
  method: DELETE
  path: /v2.1/servers/*
- service: image
  method: GET
  path: /v2/images/*
- service: image
  method: GET
  path: /v2/schemas/image
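The access rules listed above can also be kept as data and serialized when the credential is created. The following is a minimal sketch, not part of Kasm Workspaces: it rebuilds the same sixteen rules as a list of dictionaries and prints them as JSON. The names `ACCESS_RULE_TRIPLES` and `build_access_rules` are hypothetical, and how the resulting JSON is supplied to OpenStack depends on your deployment.

```python
import json

# (service, method, path) triples copied from the access rules listed above.
ACCESS_RULE_TRIPLES = [
    ("volumev3", "POST", "/v3/*/volumes"),
    ("volumev3", "DELETE", "/v3/*/volumes/*"),
    ("volumev3", "GET", "/v3/*/volumes"),
    ("volumev3", "GET", "/v3/*/volumes/*"),
    ("volumev3", "GET", "/v3/*/volumes/detail"),
    ("compute", "GET", "/v2.1/servers/detail"),
    ("compute", "GET", "/v2.1/servers"),
    ("compute", "GET", "/v2.1/flavors"),
    ("compute", "GET", "/v2.1/flavors/*"),
    ("compute", "GET", "/v2.1/servers/*/os-volume_attachments"),
    ("compute", "GET", "/v2.1/servers/*"),
    ("compute", "GET", "/v2.1/servers/*/os-interface"),
    ("compute", "POST", "/v2.1/servers"),
    ("compute", "DELETE", "/v2.1/servers/*"),
    ("image", "GET", "/v2/images/*"),
    ("image", "GET", "/v2/schemas/image"),
]


def build_access_rules(triples):
    """Turn (service, method, path) triples into a list of rule dictionaries."""
    return [{"service": s, "method": m, "path": p} for s, m, p in triples]


# Serialize the rules so they can be reviewed or stored alongside other config.
rules_json = json.dumps(build_access_rules(ACCESS_RULE_TRIPLES), indent=2)
print(rules_json)
```

Keeping the rules in one place makes it easier to compare them against the list in this document when the required permissions change.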

KubeVirt Enabled Providers

Overview

KASM supports autoscaling in Kubernetes environments that are running KubeVirt. This includes generic k8s installations as well as GKE and Harvester deployments.

Updated Startup Scripts

We have released updated startup scripts that include KubeVirt support. The most important change is the inclusion of the qemu-agent.

https://github.com/kasmtech/workspaces-autoscale-startup-scripts/blob/develop/latest/docker_agents/ubuntu.sh

The qemu-agent installation snippet is commented out by default in the startup script, and thus to use it with KubeVirt you must first uncomment it.

Config Overrides

KASM generates VMs using a Kubernetes YAML manifest described by this API specification:

https://kubevirt.io/api-reference/main/definitions.html#_v1_virtualmachine

In the event that KASM providers do not expose a required feature, the provider configuration may be overridden. In order to do this, the entire manifest must be stored in the provider config_override.

KASM will parse the manifest and attempt to update certain fields; the metadata will be updated so that the name field contains a unique name, the namespace matches the namespace in the provider config, and the labels are updated to contain various labels required for autoscale functionality. All other values will be preserved.

The runStrategy will be set to Always and the hostname will be set to match the unique name.

In order to support startup scripts, a disk with the following settings will be appended to the disks:

- name: config-drive-disk
  cdrom:
    bus: sata
    readonly: true

This points to a volume that will be appended to the volumes with the following settings:

- name: config-drive-disk
  cloudInitConfigDrive:
    secretRef:
      name: f'{name}-secret'
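The field updates described above can be sketched on a manifest that has already been parsed into a dictionary. This is an illustration only, not the provider's actual code: the function name `prepare_manifest` and the example label are hypothetical, and the field paths are assumed from the KubeVirt VirtualMachine API referenced earlier.

```python
import copy


def prepare_manifest(manifest, unique_name, namespace, extra_labels):
    """Return a copy of a VirtualMachine manifest with the described fields set."""
    vm = copy.deepcopy(manifest)  # leave the stored override untouched

    # Metadata: unique name, provider namespace, autoscale labels merged in.
    meta = vm.setdefault("metadata", {})
    meta["name"] = unique_name
    meta["namespace"] = namespace
    meta.setdefault("labels", {}).update(extra_labels)

    # runStrategy is forced to Always; hostname mirrors the unique name.
    spec = vm.setdefault("spec", {})
    spec["runStrategy"] = "Always"
    template_spec = spec.setdefault("template", {}).setdefault("spec", {})
    template_spec["hostname"] = unique_name

    # Append the config drive disk and the volume that backs it.
    devices = template_spec.setdefault("domain", {}).setdefault("devices", {})
    devices.setdefault("disks", []).append(
        {"name": "config-drive-disk", "cdrom": {"bus": "sata", "readonly": True}}
    )
    template_spec.setdefault("volumes", []).append(
        {
            "name": "config-drive-disk",
            "cloudInitConfigDrive": {"secretRef": {"name": f"{unique_name}-secret"}},
        }
    )
    return vm


# Usage with a deliberately small, hypothetical override manifest.
override = {
    "apiVersion": "kubevirt.io/v1",
    "kind": "VirtualMachine",
    "metadata": {"name": "placeholder", "labels": {"team": "demo"}},
    "spec": {"template": {"spec": {"domain": {"devices": {"disks": []}}}}},
}
result = prepare_manifest(override, "kasm-vm-1", "kasm", {"example/label": "value"})
print(result["metadata"])
```

Working on a deep copy reflects the point that one stored manifest is reused for many VMs, so each VM needs its own modified instance.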

The manifest will be used to spawn multiple VMs, so certain resources, such as PVCs, need unique names. To support this, the provider replaces every instance of $KASM_NAME with a unique name. To use it for several different types of resources, append a suffix to the name, as in this suggested PVC example:

volumes:
- name: disk-0
  persistentVolumeClaim:
    claimName: $KASM_NAME-pvc

Because the manifest spawns multiple VMs, it is also necessary to use a disk cloning method, such as the dataVolume feature of the Containerized Data Importer (CDI) interface created by KubeVirt.
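The placeholder substitution can be illustrated with a plain string replacement. This sketch is an assumption about the behavior described above, not the provider's code: `render_override` is a hypothetical helper, and the dataVolumeTemplates fragment (source PVC `kasm-ubuntu-focal` in namespace `kasm`, 30Gi) is an example based on the KubeVirt and CDI APIs rather than a fragment taken from Kasm documentation.

```python
# Hypothetical override fragment: each VM clones its own disk from a source PVC.
OVERRIDE_FRAGMENT = """\
dataVolumeTemplates:
- metadata:
    name: $KASM_NAME-dv
  spec:
    source:
      pvc:
        namespace: kasm
        name: kasm-ubuntu-focal
    storage:
      resources:
        requests:
          storage: 30Gi
volumes:
- name: disk-0
  dataVolume:
    name: $KASM_NAME-dv
"""


def render_override(manifest_text, unique_name):
    """Replace every $KASM_NAME placeholder with the VM's unique name."""
    return manifest_text.replace("$KASM_NAME", unique_name)


rendered = render_override(OVERRIDE_FRAGMENT, "kasm-vm-1a2b")
print(rendered)
```

Because the suffix (`-dv` here, `-pvc` in the example above) is part of the manifest text, the same placeholder can name several resource types without collisions between VMs.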

Caveats

The k8s namespace for KASM resources is configured on the provider and should not be changed while the provider is in use. Doing so can result in unpredictable behavior and orphaned resources. If it is necessary to change the k8s namespace, create a new autoscale configuration and provider with the new namespace, then update the old autoscale configuration, setting the standby cores, GPUs, and memory to 0. This should allow new resources to transition to the new provider.

It is possible for orphaned k8s objects to exist for various reasons, such as power loss of the KASM server during VM creation. Currently, these objects must be cleaned up manually. The k8s objects that KASM creates are: virtualmachines, secrets and PVCs.

The KASM KubeVirt provider does not work out of the box with the following Kubernetes deployments:

- KIND: the default KIND deployment uses local-path-provisioning for storage, which does not support CDI cloning.

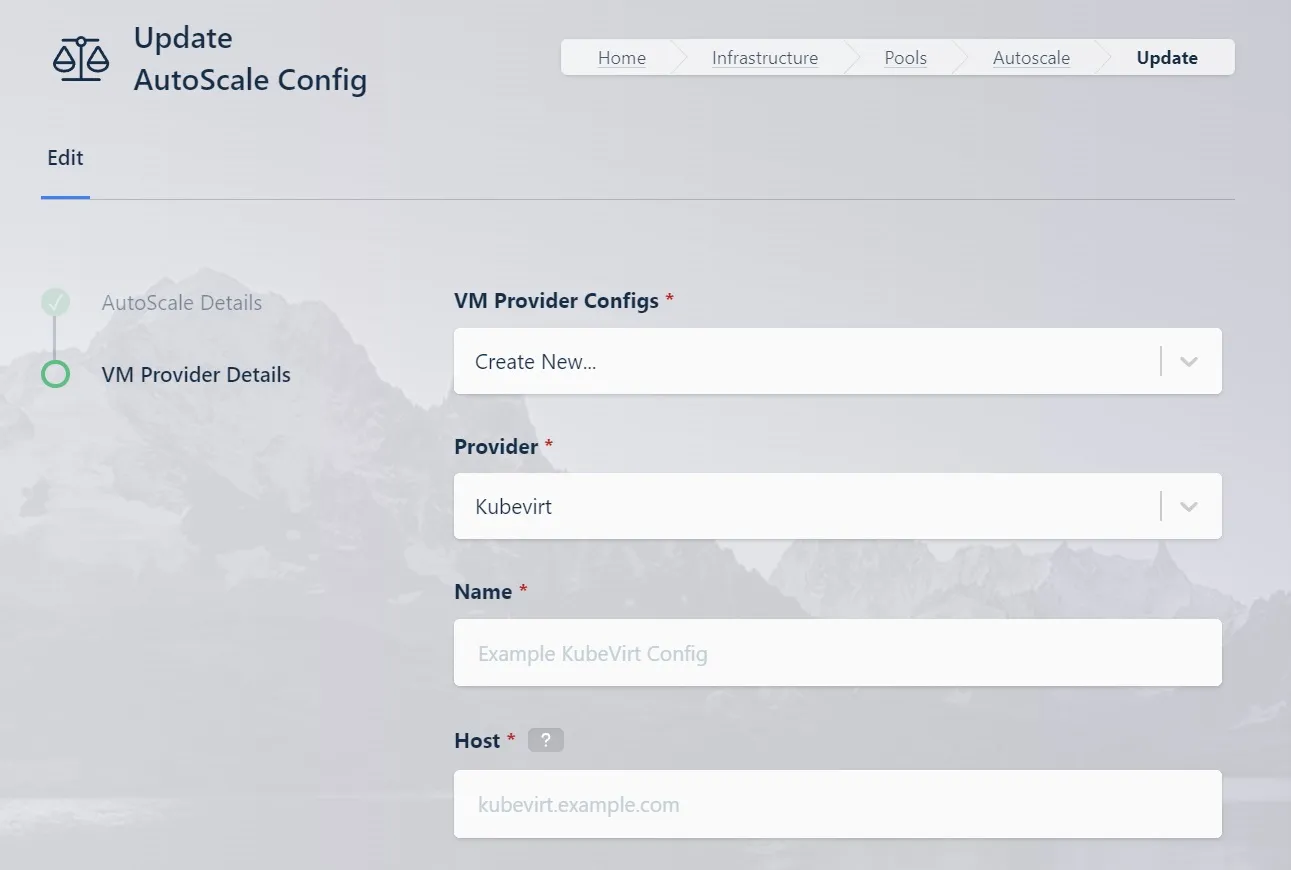

KubeVirt Settings

A number of settings are required to be defined to use this functionality. The KubeVirt settings appear in the Pool configuration when the feature is licensed.

The appropriate Kubernetes configuration options can be found by downloading the KubeConfig file provided by your Kubernetes installation.

KubeVirt VM

| Name | Description |
|---|---|
| Name | A name to use to identify the config. |
| Max Instances | The maximum number of KubeVirt compute instances to provision regardless of the need for additional resources. |
| Kubernetes Host | The address of the Kubernetes cluster (e.g. |
| Kubernetes SSL Certificate | The Kubernetes cluster certificate as a base64 encoded string of a PEM file. |
| Kubernetes API Token | The bearer token for authentication to the Kubernetes cluster. |
| VM Namespace | The name of the Kubernetes namespace where the VMs will be provisioned. |
| VM SSH Public Key | The public SSH key used to access the VM. |
| VM Cores | The number of CPU cores to configure for the VM. |
| VM Memory | The amount of memory in gibibytes (GiB) to configure for the VM. |
| VM Disk Size | The size of the disk in gibibytes (GiB) to configure for the VM. |
| VM Disk Source | The name of the source PVC containing a cloud-ready disk image used to clone a new disk volume. |
| VM Interface Type | The interface type for the VM (e.g. masquerade or bridge). |
| VM Network Name | The name of the network interface. If using a multus network, it should match the name of that network. |
| VM Network Type | The network type for the VM (e.g. pod or multus). |
| VM Startup Script | When VMs are provisioned, this script is executed and is responsible for installing and configuring the Kasm Agent. Scripts are run as bash scripts on a Linux host and PowerShell scripts on a Windows host. Additional troubleshooting steps can be found in the Creating Templates For Use With The VMware vSphere Provider section of the server documentation. |
| Configuration Override | A config override that contains a complete YAML manifest file used when provisioning the VM. |
| Enable TPM | Enable TPM for the VM. |
| Enable EFI Boot | Enable the EFI boot loader for the VM. |
| Enable Secure Boot | Enable secure boot for the VM (requires EFI boot to be enabled). |
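The host, certificate, and token settings above correspond to fields in a kubeconfig. As a rough sketch, assuming the kubeconfig has been exported as JSON (for example with `kubectl config view --raw --minify -o json`) and that it uses token authentication with an embedded CA certificate, the values could be pulled out as follows. The function name `provider_fields` and the sample values are hypothetical.

```python
import json


def provider_fields(kubeconfig_json):
    """Extract the values for the Kubernetes settings from a JSON kubeconfig."""
    config = json.loads(kubeconfig_json)
    cluster = config["clusters"][0]["cluster"]
    user = config["users"][0]["user"]
    return {
        "Kubernetes Host": cluster["server"],
        # certificate-authority-data is already the base64 encoding of a PEM file.
        "Kubernetes SSL Certificate": cluster["certificate-authority-data"],
        "Kubernetes API Token": user["token"],
    }


# Placeholder kubeconfig with made-up values, for illustration only.
sample = json.dumps(
    {
        "clusters": [
            {
                "name": "local",
                "cluster": {
                    "server": "https://203.0.113.10",
                    "certificate-authority-data": "RVhBTVBMRQ==",
                },
            }
        ],
        "users": [{"name": "kasm-admin", "user": {"token": "example-token"}}],
    }
)
fields = provider_fields(sample)
for name, value in fields.items():
    print(f"{name}: {value}")
```

A kubeconfig that references the CA certificate by file path instead of embedding it would not contain `certificate-authority-data`, so this sketch would raise a `KeyError` in that case.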

KubeVirt GKE Setup Example

This example assumes you have a GKE account, a Linux development environment, and an existing KASM deployment (ref).

The example will assume the following variables:

- cluster name: kasm
- region: us-central1
- zone: us-central1-c
- machine-type: c3-standard-8
- namespace: kasm
- storage class name: kasm-storage
- pvc name: kasm-ubuntu-focal
- pvc size: 25GiB
- pvc image: focal-server-cloudimg-amd64.img

These should be replaced with values more appropriate to your installation.

Ensure GKE is configured

Install the gcloud console (ref):

curl -O https://dl.google.com/dl/cloudsdk/channels/rapid/downloads/google-cloud-cli-linux-x86_64.tar.gz

tar -xf google-cloud-cli-linux-x86_64.tar.gz

./google-cloud-sdk/install.sh -q --path-update true --command-completion true

. ~/.profile

Initialize the gcloud console (ref):

gcloud init --no-launch-browser

gcloud config set compute/region us-central1

gcloud config set compute/zone us-central1-c

Enable the GKE engine API (ref):

gcloud services enable container.googleapis.com

Create a cluster with nested virtualization support (ref):

gcloud container clusters create kasm \
  --enable-nested-virtualization \
  --node-labels=nested-virtualization=enabled \
  --machine-type=c3-standard-8

Install the kubectl gcloud component (ref):

gcloud components install kubectl

Configure GKE kubectl authentication (ref):

gcloud components install gke-gcloud-auth-plugin

gcloud container clusters get-credentials kasm \
  --zone=us-central1-c

Create the KASM namespace:

kubectl create namespace kasm

Install KubeVirt

Note: The current v1.3 release of KubeVirt introduced a bug preventing GKE support. You must install the v1.2.2 release.

Install KubeVirt (ref):

#export RELEASE=$(curl https://storage.googleapis.com/kubevirt-prow/release/kubevirt/kubevirt/stable.txt)

export RELEASE=v1.2.2

kubectl apply -f https://github.com/kubevirt/kubevirt/releases/download/${RELEASE}/kubevirt-operator.yaml

kubectl apply -f https://github.com/kubevirt/kubevirt/releases/download/${RELEASE}/kubevirt-cr.yaml

Wait for it to be ready. This may time out multiple (2-3) times before returning successfully:

kubectl -n kubevirt wait kv kubevirt --for condition=Available
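Since the wait command may time out a few times before succeeding, it can be wrapped in a retry loop. The following is a minimal sketch under stated assumptions: `wait_for_kubevirt`, the attempt count, and the delay are all hypothetical choices, and the command is the same one shown above.

```python
import subprocess
import time

# The same wait command as above, split into arguments.
WAIT_COMMAND = [
    "kubectl", "-n", "kubevirt", "wait", "kv", "kubevirt",
    "--for", "condition=Available",
]


def wait_for_kubevirt(run=subprocess.run, attempts=5, delay_seconds=10.0):
    """Re-run the wait command until it exits with 0; return the attempt number."""
    for attempt in range(1, attempts + 1):
        if run(WAIT_COMMAND).returncode == 0:
            return attempt
        if attempt < attempts:
            time.sleep(delay_seconds)  # pause before the next try
    raise TimeoutError(f"KubeVirt was not Available after {attempts} attempts")
```

Calling `wait_for_kubevirt()` runs `kubectl` through `subprocess.run`; the `run` parameter exists only so the loop can be exercised without a cluster.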

Install the Containerized Data Importer extension

In order to support efficient cloning, KubeVirt requires the Containerized Data Importer extension (ref).

Install the CDI extension:

export VERSION=$(curl -s https://api.github.com/repos/kubevirt/containerized-data-importer/releases/latest | grep '"tag_name":' | sed -E 's/.*"([^"]+)".*/\1/')

kubectl create -f https://github.com/kubevirt/containerized-data-importer/releases/download/$VERSION/cdi-operator.yaml

kubectl create -f https://github.com/kubevirt/containerized-data-importer/releases/download/$VERSION/cdi-cr.yaml
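The `grep` and `sed` pipeline above depends on how the GitHub API response is formatted. As an alternative sketch, the `tag_name` field can be read with a JSON parser. The function names are hypothetical, and the URL is the same one used in the command above.

```python
import json
import urllib.request

RELEASES_URL = (
    "https://api.github.com/repos/kubevirt/containerized-data-importer/releases/latest"
)


def parse_tag_name(payload):
    """Return the tag_name field from a GitHub release JSON document."""
    return json.loads(payload)["tag_name"]


def latest_cdi_version(url=RELEASES_URL):
    """Fetch the latest release document and return its tag (needs network access)."""
    with urllib.request.urlopen(url) as response:
        return parse_tag_name(response.read().decode("utf-8"))
```

The value returned by `latest_cdi_version()` plays the same role as `$VERSION` in the commands above.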

Create a new storage class that uses the GKE CSI driver and has the Immediate volume binding mode:

kubectl apply -f - <<EOF
allowVolumeExpansion: true
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  annotations:
    components.gke.io/component-name: pdcsi
    components.gke.io/component-version: 0.18.23
    components.gke.io/layer: addon
    storageclass.kubernetes.io/is-default-class: "true"
  labels:
    addonmanager.kubernetes.io/mode: EnsureExists
    k8s-app: gcp-compute-persistent-disk-csi-driver
  name: kasm-storage
parameters:
  type: pd-balanced
provisioner: pd.csi.storage.gke.io
reclaimPolicy: Delete
volumeBindingMode: Immediate
EOF

Mark any existing default storage classes as non-default:

kubectl patch storageclass standard-rwo -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"false"}}}'

Create local kubectl authentication

Currently, in order to authenticate with the GKE cluster, KASM needs a local kubectl authentication account.

Create a service account:

KUBE_SA_NAME="kasm-admin"

kubectl create sa $KUBE_SA_NAME

kubectl create clusterrolebinding $KUBE_SA_NAME --clusterrole cluster-admin --serviceaccount default:$KUBE_SA_NAME

Manually create a long-lived API token for the service account:

kubectl apply -f - <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $KUBE_SA_NAME-secret
  annotations:
    kubernetes.io/service-account.name: $KUBE_SA_NAME
type: kubernetes.io/service-account-token
EOF

Generate the kubeconfig:

KUBE_DEPLOY_SECRET_NAME=$KUBE_SA_NAME-secret

KUBE_API_EP=`gcloud container clusters describe kasm --format="value(privateClusterConfig.publicEndpoint)"`

KUBE_API_TOKEN=`kubectl get secret $KUBE_DEPLOY_SECRET_NAME -o jsonpath='{.data.token}'|base64 --decode`

KUBE_API_CA=`kubectl get secret $KUBE_DEPLOY_SECRET_NAME -o jsonpath='{.data.ca\.crt}'`

echo $KUBE_API_CA | base64 --decode > tmp.deploy.ca.crt

touch $HOME/local.cfg

export KUBECONFIG=$HOME/local.cfg

kubectl config set-cluster local --server=https://$KUBE_API_EP --certificate-authority=tmp.deploy.ca.crt --embed-certs=true

kubectl config set-credentials $KUBE_SA_NAME --token=$KUBE_API_TOKEN

kubectl config set-context local --cluster local --user $KUBE_SA_NAME

kubectl config use-context local

Validate that your kubeconfig works:

kubectl version

It should display both the client and server versions. If it does not, retrieve the current config used by kubectl to ensure it is using the correct config:

kubectl config view

Ensure that it is using the local settings you generated and not an existing GKE configuration.

Upload a PVC

The virtctl tool can be used to upload a VM image. Both the raw and qcow2 formats are supported. The image should be cloud-ready, with cloud-init configured.

Download and install the virtctl tool:

VERSION=$(kubectl get kubevirt.kubevirt.io/kubevirt -n kubevirt -o=jsonpath="{.status.observedKubeVirtVersion}")

ARCH=$(uname -s | tr A-Z a-z)-$(uname -m | sed 's/x86_64/amd64/') || windows-amd64.exe

echo ${ARCH}

curl -L -o virtctl https://github.com/kubevirt/kubevirt/releases/download/${VERSION}/virtctl-${VERSION}-${ARCH}

chmod +x virtctl

sudo install virtctl /usr/local/bin

Expose the CDI Upload Proxy by executing the following command in another terminal:

kubectl -n cdi port-forward service/cdi-uploadproxy 8443:443

Use the virtctl tool to upload the VM image:

virtctl image-upload pvc kasm-ubuntu-focal --uploadproxy-url=https://localhost:8443 --size=25Gi --image-path=./focal-server-cloudimg-amd64.img --insecure -n kasm

Ensure KASM is configured

Configure KASM

Add a license

Set the default zone upstream address to the address of the KASM host

Add a Pool

- Name: KubeVirt Pool
- Type: Docker Agent

Add an Auto-Scale config

- Name: KubeVirt AutoScale
- AutoScale Type: Docker Agent
- Pool: KubeVirt Pool
- Deployment Zone: default
- Standby Cores: 4
- Standby GPUs: 1
- Standby Memory: 4000
- Downscale Backoff: 600
- Agent Cores Override: 4
- Agent GPUs Override: 1
- Agent Memory Override: 4

Create a new VM Provider

- Provider: KubeVirt
- Name: KubeVirt Provider
- Max Instances: 10
- Host: paste server URI from kubeconfig
- SSL Certificate: paste certificate-authority-data from kubeconfig
- API Token: paste token from kubeconfig
- VM Namespace: kasm
- VM Public SSH Key: paste user public ssh key
- Cores: 4
- Memory: 4
- Disk Source: kasm-ubuntu-focal
- Disk Size: 30
- Interface Type: bridge
- Network Name: default
- Network Type: pod
- Startup Script: paste ubuntu docker agent startup script